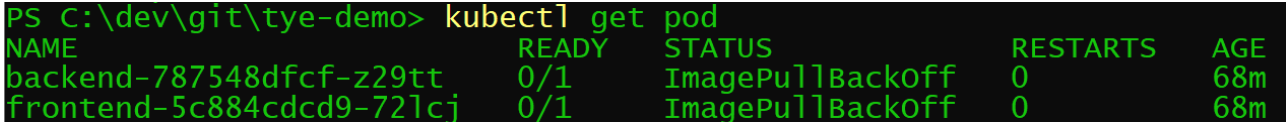

When deploying an application to an Azure Kubernetes Service (AKS) cluster, it can happen that pods do not start for no obvious reason.

When that happens, the services backed by those pods will not work either.

For example, you will not be able to start port forwarding on the service related to that pod:

kubectl port-forward svc/frontend 5000:80
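One way to see why the service cannot be used is to check whether it has any ready endpoints. The following is a minimal sketch for a POSIX shell (the kubectl call itself is the same in PowerShell). The helper name ready_endpoints is hypothetical, and the jsonpath expression assumes the classic Endpoints object of the service:

```shell
# Print the IPs of the ready pods behind a service.
# Empty output means no pod is currently ready to receive traffic.
ready_endpoints() {
  kubectl get endpoints "$1" -o jsonpath='{.subsets[*].addresses[*].ip}'
}

# Usage (service name taken from the port-forward command above):
#   ready_endpoints frontend
```

If nothing is printed, the problem is in the pods rather than in the service definition.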

You can analyse the pod status with the following command:

PS > kubectl describe pod frontend-5c884cdcd9-72lcj

Name: frontend-5c884cdcd9-72lcj

Namespace: default

Priority: 0

Node: aks-agentpool-21750945-vmss000001/10.240.0.5

Start Time: Sun, 06 Dec 2020 13:01:37 +0100

Labels: app.kubernetes.io/name=frontend

app.kubernetes.io/part-of=tye

pod-template-hash=5c884cdcd9

Annotations: <none>

Status: Pending

IP: 10.244.0.7

IPs:

IP: 10.244.0.7

Controlled By: ReplicaSet/frontend-5c884cdcd9

Containers:

frontend:

Container ID:

Image: ****.azurecr.io/frontend:1.0.0

Image ID:

Port: 80/TCP

Host Port: 0/TCP

State: Waiting

Reason: ImagePullBackOff

Ready: False

Restart Count: 0

Environment:

DOTNET_LOGGING__CONSOLE__DISABLECOLORS: true

ASPNETCORE_URLS: http://*

PORT: 80

SERVICE__FRONTEND__PROTOCOL: http

SERVICE__FRONTEND__PORT: 80

SERVICE__FRONTEND__HOST: frontend

SERVICE__BACKEND__PROTOCOL: http

SERVICE__BACKEND__PORT: 80

SERVICE__BACKEND__HOST: backend

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from default-token-k548s (ro)

Conditions:

Type Status

Initialized True

Ready False

ContainersReady False

PodScheduled True

Volumes:

default-token-k548s:

Type: Secret (a volume populated by a Secret)

SecretName: default-token-k548s

Optional: false

QoS Class: BestEffort

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning Failed 112s (x307 over 71m) kubelet Error: ImagePullBackOff
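The output is long, but the relevant part is the State and Reason of the container. As a rough sketch, a small POSIX shell filter can pull out just that field. The function name waiting_reason is made up for illustration, and the filter assumes the describe layout shown above:

```shell
# Read `kubectl describe pod` output from stdin and print the Reason
# of every container whose State is Waiting.
waiting_reason() {
  awk '
    # Remember whether the state we just saw is Waiting.
    # "Last State:" lines do not match because their first field is "Last".
    $1 == "State:"            { waiting = ($2 == "Waiting") }
    # The Reason line that follows a Waiting state is the one we want.
    $1 == "Reason:" && waiting { print $2; waiting = 0 }
  '
}

# Usage (pod name taken from the example above):
#   kubectl describe pod frontend-5c884cdcd9-72lcj | waiting_reason
```

For the pod above this would print ImagePullBackOff, which means the kubelet failed to pull the image and is backing off between retries.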

This issue occurs, among other causes, when the Kubernetes cluster has not been granted access to the container registry. The grant can be set up when the cluster is created, for example with the Azure CLI (az) by specifying the --attach-acr argument:

az aks create --resource-group myResourceGroup --name myAKSCluster --node-count 2 --generate-ssh-keys --attach-acr <acrName>

Replace <acrName> with the name of your container registry.

To allow an AKS cluster to interact with other Azure resources, an Azure Active Directory service principal is created automatically if you do not specify one. With --attach-acr, this service principal is granted the right to pull images from the given Azure Container Registry (ACR) instance. Note that you can use a managed identity instead of a service principal for easier management.

https://docs.microsoft.com/en-us/azure/aks/tutorial-kubernetes-deploy-cluster
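If the cluster already exists, the same grant can be added afterwards. The sketch below wraps two Azure CLI commands, az aks update --attach-acr and az aks check-acr, in POSIX shell functions. The function names and the registry name in the usage comment are placeholders chosen for illustration, not taken from the setup described above:

```shell
# Grant an existing AKS cluster pull access to an ACR instance.
# Arguments: resource group, cluster name, registry name.
attach_acr() {
  az aks update --resource-group "$1" --name "$2" --attach-acr "$3"
}

# Ask the Azure CLI to validate that the cluster can pull from the registry.
# --acr is given the registry login server, assumed here to be <name>.azurecr.io.
check_acr() {
  az aks check-acr --resource-group "$1" --name "$2" --acr "$3.azurecr.io"
}

# Usage (myRegistry is a hypothetical registry name):
#   attach_acr myResourceGroup myAKSCluster myRegistry
#   check_acr  myResourceGroup myAKSCluster myRegistry
```

Once access is granted, the kubelet keeps retrying the pull on its back-off schedule, so the pending pod should start without being recreated.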